Performance Schema … How to (Part1)

Performance Schema (PS) has been the subject of many, many recent discussions, presentations, and articles. After its release in MySQL 5.7, PS has become the main actor for people who want to take the further steps in MySQL monitoring. At the same time, it has become clear that Oracle intends to make PS powerful with so many features and new instrumentation that old-style monitoring will begin to look like obsolete tools from the Stone Age.

This article will explain PS and provide guidance on what needs to be done in order to use it effectively.

What I am not going to do is to dig into specific performance issues or address polemics about what PS is and what, in a Utopian vision, it should be. I have seen too many presentations, articles and comments like this and they are not productive, nor are they in line with my target which is: keep people informed on how to do things EASILY.

For the scope of this article I will base my code mainly on version MySQL 5.7, with some digression to MySQL 5.6, if and when it makes sense.

Basic Concepts

Before starting the real how-to, it is my opinion that we must cover a few basic concepts and principles about PS. The primary goal of the Performance Schema is to measure (instrument) the execution of the server. A good measure should not cause any change in behavior. To achieve this, the overall design of the Performance Schema complies with the following, very severe design constraints:

- The parser is unchanged. Also, there are no new keywords or statements. This guarantees that existing applications will run the same way with or without the Performance Schema.

- All the instrumentation points return "void", there are no error codes. Even if the performance schema fails internally, execution of the server code will proceed.

- None of the instrumentation points allocate memory. All the memory used by the Performance Schema is pre-allocated at startup, and is considered "static" during the server life time.

- None of the instrumentation points use any pthread_mutex, pthread_rwlock, or pthread_cond (or platform equivalents). Executing the instrumentation point should not cause thread scheduling to change in the server.

In other words, the implementation of the instrumentation points, including all the code called by the instrumentation points is:

- Malloc free

- Mutex free

- Rwlock free

Currently, there is still an issue with the usage of the LF_HASH, which introduces memory allocation, though a plan exists to be replace it with lock-free/malloc-free hash code table.

The observer should not influence the one observe. As such, the PS must be as fast as possible, while being less invasive. In cases when there are choices between:

Processing when recording the performance data in the instrumentation.

OR

Processing when retrieving the performance data.

Priority is given in the design to make the instrumentation faster, pushing some complexity to data retrieval.

Performance schema was designed while keeping an eye on future developments and how to facilitate the PS usage in new code. As such, to make it more successful, the barrier of entry for a developer should be low, so it is easy to instrument code. This is particularly true for the instrumentation interface. The interface is available for C and C++ code, so it does not require parameters that the calling code cannot easily provide, supports partial instrumentation (for example, instrumenting mutexes does not require that every mutex is instrumented). The Performance Schema instrument interface is designed in such a way that any improvement/additions in the future will not require modifications, as well as old instrumentation remaining unaffected by the changes.

The final scope for PS is to have it implemented in any plugin included in MySQL, although pretending to have them always using the latest version will be unrealistic in most cases. Given that the Performance Schema implementation must provide up to date support, within the same deployment, multiple versions of the instrumentation interface must ensure binary compatibility with each version.

The importance of flexibility means we may have conditions like:

- Server supporting the Performance Schema + a storage engine that is instrumented.

- Server supporting the Performance Schema + a storage engine that is not instrumented.

- Server not supporting the Performance Schema + a storage engine that is instrumented.

Finally, we need to take in to account that the Performance Schema can be included or excluded from the server binary, using build time configuration options, with exposure in the compiling interface.

Performance Schema Interfaces

As mentioned above, PS can be excluded from code at the moment of the code compilation, thanks to the PS compile interface. This interface is one of seven that are present in PS. The full list is:

- Instrument interface

- Compiling interface

- Server bootstrap interface

- Server startup interface

- Runtime configuration interface

- Internal audit interface

- Query interface

Instrument Interface:

This is the one that allows plugin implementers to add their instruments to PS. In general the interface is available for:

- C implementations

- C++ implementations

- The core SQL layer (/sql)

- The mysys library (/mysys)

- MySQL plugins, including storage engines,

- Third party plugins, including third party storage engines.

Compiling Interface:

As mentioned earlier, this is used during the build and will include or exclude PS code from the binaries.

Server Bootstrap Interface:

This is an internal private interface, which has the scope to provide access to the instructions demanded and create the tables for the PS itself.

Server Startup Interface:

This interface will expose options used with the mysqld command line or in the my.cnf, required to:

- Enable or disable the performance schema.

- Specify some sizing parameters.

Runtime Configuration Interface

This is one of the two most important interfaces for DBAs and SAs. It will allow the configuration of the PS at runtime. Using the methods expose by this interface, we will be able to configure what instruments, consumers, users and more we want to have active. This interface uses standard SQL and is very easy to access and use. Also, it is the preferred method to activate or deactivate instruments. Thus, when we start the server we should always enable the PS with all the instruments and consumers deactivated, and use this interface to choose only the ones we are interested in.

Internal Audit Interface:

The internal audit interface is provided to the DBA to inspect if the Performance Schema code itself is functioning properly. This interface is necessary because a failure caused while instrumenting code in the server should not cause failures in the MySQL server itself, and in turn the performance schema implementation never raises errors during runtime execution. To access the information a DBA just needs to issue the SHOW ENGINE PERFORMANCE SCHEMA STATUS; command.

Query Interface:

Lastly, this interface is the one that allows us to access the collected data, and to perform data filtering, grouping, join, etc. It will also allow access to a special table like the summary tables and digest, which will be discussed later on.

Consumers and Instruments

Another important concept in PS to understand is the difference between Instruments and Consumers.

Instruments:

Instruments are the ones collecting raw data where the calls are embedded in the code, such as:

MYSQL_TABLE_IO_WAIT(PSI_TABLE_FETCH_ROW, active_index, result, { result= index_prev(buf); })

In this case the code refers to the MYSQL_TABLE_IO_WAIT function declared in the handler.cc class (<mysql_root_code>/sql/handler.cc). If enabled in the compilation phase the above function will provide PS the information related to specific table io_wait.

The instruments demanded to manage that data collection is: wait/io/table/sql/handler.

The naming convention for the instruments is quite easy. The first part wait is the name of the Top-level Instrument component (list later), the second io is the observed condition, and table is the object. The remaining suffix is referring to more specific plugin implementations and includes innodb, myisam, sql or names like IO_CACHE::append_buffer_lock. In the above example it refers to the Handler class in SQL tree.

Instruments are organized by top level components like:

- Idle: An instrumented idle event. This instrument has no further components.

- Memory: An instrumented memory event.

- Stage: An instrumented stage event.

- Statement: An instrumented statement event.

- Transaction: An instrumented transaction event. This instrument has no further components.

- Wait: An instrumented wait event.

Each top level has an n number of instruments:

+-------------+------+ | name | Numb | +-------------+------+ | idle | 1 | | memory | 367 | | stage | 117 | | statement | 191 | | transaction | 1 | | wait | 297 | +-------------+------+

We can and should keep in consideration that, it is best practice to enable only the instruments we may require for the time we need them. This can be achieved using the re-using the runtime interface (I will explain how exactly later on).

There exists official documentation (http://dev.mysql.com/doc/refman/5.7/en/performance-schema-instrument-naming.html) providing more detailed information about the list of what is available for each Top Component.

Consumers:

The Consumers are the destination of the data collected from the instruments. Consumers have different scope and timelines. Also, consumer like event statements has many different tables like:

- Current

- History

- History long

- Summaries (by different aggregation)

- Summary Digest (like what we can find by processing the slow query log)

Once more it is important to define what we are looking for and enable only what we need. For instance, if we need to review/identify the SQL with the most impacting, we should enable only the events_statements_current, events_statements_history and events_statements_summary_by_digest. All the other consumers can stay off. It is also important to keep in mind that each event may have a relation with another one. In this case, we will be able to navigate the tree relating the events using the fields EVENT_ID and NESTING_EVENT_ID where the last one is the EVENT_ID of the parent.

Pre-Filtering vs. Post-filtering

We are almost there, stay tight! Another important concept to understand is the difference between post and pre-filtering. As I mentioned, we can easily query the Consumer tables with SQL, we can create complex SQL to join tables and generate complex reports. But this can be quite heavy and resource consuming, especially if we want to dig on specific sections of our MySQL server.

In this case we can use the pre-filtering approach. The pre-filtering is basically a way to tell to PS to collect information ONLY from a specific source like user/IP (actors) or Object(s) like Tables, Triggers, Events, and Functions. The last one can be set at a general level or down to a specific object name.

The pre-filtering with the activation of the right instruments and consumer is a powerful way to collect the information without overloading the server with useless data. It is also very easy to implement given we just need to set the objects and/or actors in the setup tables as we like.

Rolling the Ball, Setup the PS for Observation as Start

Now that we have covered the basic concepts we can start to work on the real implementation.

Compile the Source Code:

As mentioned earlier, we can use the compile interface to include or exclude features from the code compilation. The available options are:

- DISABLE_PSI_COND Exclude Performance Schema condition instrumentation

- DISABLE_PSI_FILE Exclude Performance Schema file instrumentation

- DISABLE_PSI_IDLE Exclude Performance Schema idle instrumentation

- DISABLE_PSI_MEMORY Exclude Performance Schema memory instrumentation

- DISABLE_PSI_METADATA Exclude Performance Schema metadata instrumentation

- DISABLE_PSI_MUTEX Exclude Performance Schema mutex instrumentation

- DISABLE_PSI_RWLOCK Exclude Performance Schema rwlock instrumentation

- DISABLE_PSI_SOCKET Exclude Performance Schema socket instrumentation

- DISABLE_PSI_SP Exclude Performance Schema stored program instrumentation

- DISABLE_PSI_STAGE Exclude Performance Schema stage instrumentation

- DISABLE_PSI_STATEMENT Exclude Performance Schema statement instrumentation

- DISABLE_PSI_STATEMENT_DIGEST Exclude Performance Schema statement_digest instrumentation

- DISABLE_PSI_TABLE Exclude Performance Schema table instrumentation

This level of detail is so granular that we can only include the things we are planning to use.

The positive aspect of doing so at the compilation level is that we will be sure no one will mess-up adding undesired instruments. The drawback is that if we change our mind and we decide we may need the ones we had excluded, we will have to compile the whole server again.

As a result, I would say that using this approach is not for someone that is just starting to use PS. Given you are still discovering what is there, it make sense to compile with all the features (default).

Configure PS in my.cnf:

To set the PS correctly in the my.cnf is quite important, so I strongly suggest disabling any instrument and consumer at the start-up. They can be enabled by the script later, and that would be much safer for a production database.

I normally recommend a section like the following:

performance_schema=1 performance_schema_events_waits_history_size=50 performance_schema_events_waits_history_long_size=15000 performance_schema_instrument='%=OFF' performance_schema_consumer_events_stages_current=0 performance_schema_consumer_events_stages_history=0 performance_schema_consumer_events_stages_history_long=0 performance_schema_consumer_events_statements_current=0 performance_schema_consumer_events_statements_history=0 performance_schema_consumer_events_statements_history_long=0 performance_schema_consumer_events_transactions_current=0 performance_schema_consumer_events_transactions_history=0 performance_schema_consumer_events_transactions_history_long=0 performance_schema_consumer_events_waits_current=0 performance_schema_consumer_events_waits_history=0 performance_schema_consumer_events_waits_history_long=0 performance_schema_consumer_global_instrumentation=0 performance_schema_consumer_thread_instrumentation=0 performance_schema_consumer_statements_digest=0

The settings above will start the server with PS as “enabled”, but all the instruments and consumer will be OFF. Well, this is not entirely true, as for the moment of the writing (MySQL 5.7.7) once the PS is enabled the instruments related to memory/performance_schema are enabled regardless, which make sense given they are dedicated to monitor the memory utilization of PS.

A final note about the configuration is that we can decide to use the counting option of the instruments instead, capturing the latency time. To do so, we just have to declare it as: performance_schema_instrument='statement/sql/%=COUNTED'

In this case I had set that ALL the SQL statements should be counted.

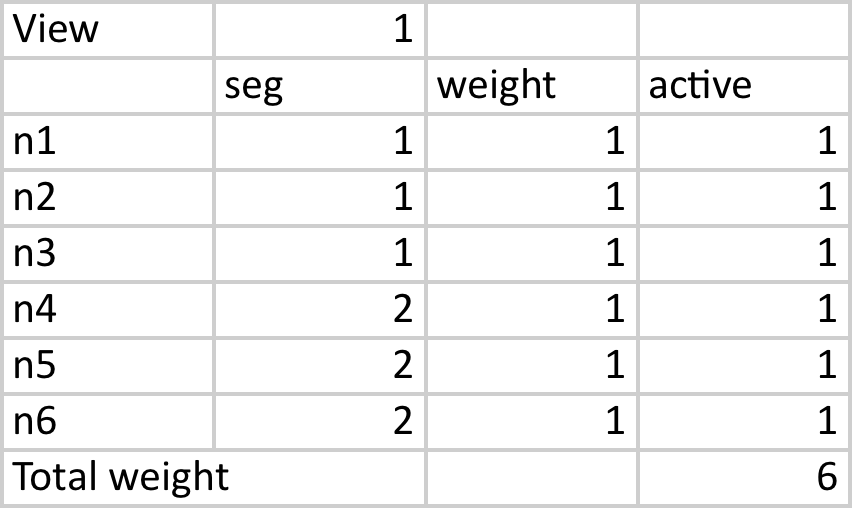

Start Server and Set Only the Users We Need:

Once we have started our MySQL server, we are almost ready to go.

This is it, given we start it with NO instruments, we have to decide where to begin, and given we all know the most impacting factor in a database server is how we query it, we will start from there. In turn, analyzing what is going from the SQL point of view. Although, I want to catch the work coming from my application user, not from everywhere. Given this we can set the user in the actor table. This is very simple given we will use the Runtime configuration interface which uses SQL syntax.

So, let say I want to trace only my application user named stress running from machines in the 10.0.0.0/24 range. I will need to:

UPDATE setup_actors SET ENABLED='NO' WHERE user='%'; INSERT INTO setup_actors VALUES('10.0.0.%','stress','%','YES'); (root@localhost) [performance_schema]>select * FROM setup_actors; +----------+--------+------+---------+ | HOST | USER | ROLE | ENABLED | +----------+--------+------+---------+ | % | % | % | NO | | 10.0.0.% | stress | % | YES | +----------+--------+------+---------+ 2 rows IN SET (0.00 sec)

Great, from now on PS will only focus on my user stress, so now let us decide what to enable for instruments and consumers.

Once more using SQL command we will enable all the instruments related to SQL statements, but wait a minute, if you check the instrument table, you will see we have several variations of the statements instrument:

- SQL

- SP

- Scheduler

- Com

- Abstract

Also, this is not included but relevant is the TRANSACTION. For now, we will only enable the SQL, ABSTRACT, Scheduler and Transaction.

SQL will be:

UPDATE setup_instruments SET ENABLED='YES' WHERE ENABLED='NO' AND name LIKE 'statement/abstract/%'; UPDATE setup_instruments SET ENABLED='YES' WHERE ENABLED='NO' AND name LIKE 'statement/sql/%'; UPDATE setup_instruments SET ENABLED='YES' WHERE ENABLED='NO' AND name LIKE 'transaction'; (root@localhost) [performance_schema]>select count(*) FROM setup_instruments

WHERE ENABLED = 'YES' AND name NOT LIKE 'memory%'; +----------+ | count(*) | +----------+ | 143 | +----------+ 1 row IN SET (0.01 sec)

We have 143 instruments active. Now we must setup the consumers and choose the destination that will receive the data.

The list of consumers is the following:

(root@localhost) [performance_schema]>select * FROM setup_consumers; +----------------------------------+---------+ | NAME | ENABLED | +----------------------------------+---------+ | events_stages_current | NO | | events_stages_history | NO | | events_stages_history_long | NO | | events_statements_current | NO | | events_statements_history | NO | | events_statements_history_long | NO | | events_transactions_current | NO | | events_transactions_history | NO | | events_transactions_history_long | NO | | events_waits_current | NO | | events_waits_history | NO | | events_waits_history_long | NO | | global_instrumentation | NO | | thread_instrumentation | NO | | statements_digest | NO | +----------------------------------+---------+ 15 rows IN SET (0.00 sec)

To enable ANY of them, first we have to enable the GLOBAL one, which works as a global power on/off. The same thing applies for the Thread instrumentation:

UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='global_instrumentation'; UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='thread_instrumentation';

Then we need to activate at least the events_statements_current to see something, I suggest activating also history and statements_digest.

UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='events_statements_current'; UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='events_statements_history'; UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='statements_digest'; UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='events_transactions_current'; UPDATE setup_consumers SET ENABLED='YES' WHERE NAME='events_transactions_history';

As result, we will have the following consumers activated:

(root@localhost) [performance_schema]>select * FROM setup_consumers; +----------------------------------+---------+ | NAME | ENABLED | +----------------------------------+---------+ | events_stages_current | NO | | events_stages_history | NO | | events_stages_history_long | NO | | events_statements_current | YES | | events_statements_history | YES | | events_statements_history_long | NO | | events_transactions_current | YES | | events_transactions_history | YES | | events_transactions_history_long | NO | | events_waits_current | NO | | events_waits_history | NO | | events_waits_history_long | NO | | global_instrumentation | YES | | thread_instrumentation | YES | | statements_digest | YES | +----------------------------------+---------+ 15 rows IN SET (0.00 sec)

Final optimization for the pre-filtering is to decide IF we want to catch all the objects and reduce them to a subset. By default PS will use the settings below:

(root@localhost) [performance_schema]>select * FROM setup_objects; +-------------+--------------------+-------------+---------+-------+ | OBJECT_TYPE | OBJECT_SCHEMA | OBJECT_NAME | ENABLED | TIMED | +-------------+--------------------+-------------+---------+-------+ | EVENT | mysql | % | NO | NO | | EVENT | performance_schema | % | NO | NO | | EVENT | information_schema | % | NO | NO | | EVENT | % | % | YES | YES | | FUNCTION | mysql | % | NO | NO | | FUNCTION | performance_schema | % | NO | NO | | FUNCTION | information_schema | % | NO | NO | | FUNCTION | % | % | YES | YES | | PROCEDURE | mysql | % | NO | NO | | PROCEDURE | performance_schema | % | NO | NO | | PROCEDURE | information_schema | % | NO | NO | | PROCEDURE | % | % | YES | YES | | TABLE | mysql | % | NO | NO | | TABLE | performance_schema | % | NO | NO | | TABLE | information_schema | % | NO | NO | | TABLE | % | % | YES | YES | | TRIGGER | mysql | % | NO | NO | | TRIGGER | performance_schema | % | NO | NO | | TRIGGER | information_schema | % | NO | NO | | TRIGGER | % | % | YES | YES | +-------------+--------------------+-------------+---------+-------+ 20 rows IN SET (0.00 sec)

It is easy to understand that ANY object existing in the default Schema will be ignored. In our case, for now, we will keep it as it is, but this will be our next filtering step after we have analyzed some data. This will happen in the PART 2, stay tuned.

Conclusions

For now, you should understand what a Performance Schema is, its basic concept, as well as what interfaces are available and for what. You should also be able to compile the source code with and without PS, or part of it. You should be able to configure the MySQL configuration file correctly, and perform the initial configuration at runtime. Finally, you should know how to query the PS and how to dig in the information, which will also be discussed in the Part 2.